Review: Can Microsoft Compete? Part I

Intro, layers, silicon, infrastructure, and ROI/FCF

Summary

What it does: enterprise software.

Elevator pitch: arguably one of the finest franchises ever built, with deep distribution advantages that let it deliver innovations cheaply to a huge customer base.

Mental model: moat (read about my mental models here).

Valuation and potential returns: 21x June 2027 EPS estimates and growing EPS 20% per year.

Exchange and ticker: Nasdaq, MSFT

Stock price and market cap: $401, $3.2tn.

Do I own it? Yes.

IR website: here.

About this blog: I have been investing for 25 years, professionally and personally. I look for stocks that have a high probability of compounding at 15% for at least 5 years with limited downside. I write these stocks up on my blog. You can find more about me, my philosophy, my mental models, and my portfolio structure on my site.

Disclaimer: This post is for informational and educational purposes only. Building Arks is not licensed or regulated to provide any financial advisory service and nothing published by Building Arks should be taken as a recommendation to buy or sell securities, relied upon as financial advice, or treated as individual investment advice designed to meet your personal financial needs. You are advised to discuss your personal investment needs and options with qualified financial advisers. Building Arks uses information sources believed to be reliable, but does not guarantee the accuracy of the information in this post. The opinions expressed in this post are those of the publisher and are subject to change without notice. The publisher may or may not hold positions in the securities discussed in this post and may purchase or sell such positions without notice.

Introduction

I have owned Microsoft since 2010. What gave me the confidence not to sell - even as the company transformed and the multiple expanded - was a deep conviction in Microsoft’s competitive moat. Not its technology. I don’t think technology ever constitutes a sustainable advantage in itself. The moat was distribution. Microsoft sells to enterprise. Enterprises move slowly. Microsoft is deeply embedded: enterprise IT departments are built on its products, and it has a huge reseller network reaching millions of SMEs. Microsoft leverages this difficult-to-replicate incumbency by copying innovations (it is the quintessential second-mover) and bundling them cheaply alongside existing products. This has been remarkably hard for standalone competitors to fight.

Distribution + time to react + cheap bundling = a moat full of crocodiles.

Does AI change this?

Satya

Satya Nadella is the CEO of Microsoft and the chief architect of its renaissance over the last 15 years. Two things he has said over the years rattle round my brain:

The software industry - and by implication, Microsoft - has no franchise value.

With agentic AI, Microsoft’s TAM is every process in every organisation.

Both of these comments are mind-blowing. One is a stark warning. The other is exceptionally bullish. They appear contradictory, yet both are right. This makes analysing Microsoft a fascinating challenge.

Why I wrote this

Microsoft is a largeish position for me, and I have a long time horizon - 5 years at minimum, and ideally 10 years or more. However, Microsoft’s business is changing rapidly as AI alters its competitive landscape. I needed a better framework for understanding how the industry is evolving. A vast amount has been written about this, although a lot of it is very short term (Azure only grew 39% in 2q26, not 40%!). This piece is an attempt to synthesise what I have read into a framework for interpreting newsflow over the next few years: what will be the signs that Microsoft’s competitive position is strengthening, or weakening?

I am a generalist, not a tech expert. This can make interpreting short term newsflow harder, but might make interpreting long term trends easier, because what will drive long term trends is not the latest tech advance but classic competitive dynamics. The key principle is simple: if too many companies can do a thing, pricing will fall until the return on capital equals the cost of capital. The main beneficiaries of innovation will be the customer, not the innovator.

This piece focuses on each layer of the AI tech stack and whether long term competitive advantage will be carved out in any of them (or by combining any of them). It does not address the idea that AI drives the value of everything to zero and software is dead. This is deliberate. I see limited evidence in favour of this theory so far. On the contrary, AI seems to be strengthening the classic tech competitive advantages of network effects and access to unique data. In addition I think it is almost impossible to analyse this thesis sensibly given the comprehensive advances in technology that would be necessary and the unpredictable ways incumbents might find to defend themselves. I do not dismiss the possibility, but rather than letting it scare me out of investing in the greatest productivity advance in human history I prefer to think through the wider impacts and find ways to hedge. But that’s a separate topic entirely.

This article will be published in 3 parts:

Introduction, layers, silicon, and infrastructure.

LLMs and agents.

Distribution, combining layers, and conclusion.

Layers

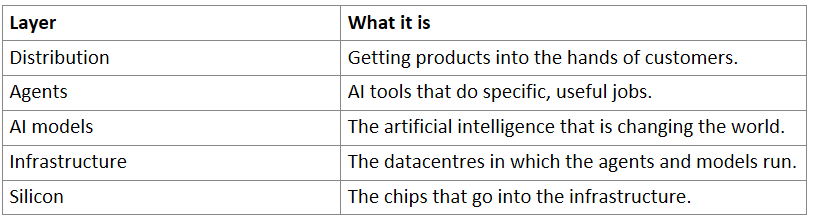

There are multiple layers in the AI future:

We need to frame whether real competitive moats can be created within each layer or by combining layers. And to do that, we need to start at the bottom.

Silicon

Having the cheapest compute is a clear advantage in the AI race. Evidence for this is already showing up in earnings releases. The undisputed champion of highly advanced general-purpose AI silicon is Nvidia and it does not look like this will change any time soon. All AI infrastructure builders will probably need to buy Nvidia chips for a long time. This levels the playing field to some degree, because they’re all using the same chips for large parts of their workload.

That said, by designing silicon in-house, hyperscalers like Microsoft can keep pressure on Nvidia pricing and maximise infrastructure efficiency by tightly integrating chip design with the hardware and software that make up the rest of the system. One of the core themes of this article is that several layers of the AI stack will at least partly commoditise, and in commoditised industries efficiency is the key competitive battleground.

Amazon, Google, and Microsoft all have their own in-house silicon design programmes, which are emerging as core competitive advantages and significant businesses in their own right. Microsoft’s programme is currently the laggard. It unveiled its first chip in late 2023, several years after Amazon and Google. It is therefore promising that Microsoft’s Maia 200 chip, released in January 2026, already appears to be competitive with Google’s and Amazon’s inference chips. However, the data comes from Microsoft’s internal testing, the exact parameters for these tests have not been released, and there is limited third party validation. In addition, Microsoft’s production volumes are much smaller than Google’s or Amazon’s.

In short: this is a weak point for Microsoft but they are addressing it. Given the importance of inference efficiency, what we know about Maia, and the Microsoft programme’s comparative immaturity, I think Microsoft is likely to close the competitive gap rather than slip further behind. I think all 3 companies will have highly competitive silicon, and this will be a competitive advantage against compute providers who don’t have in-house silicon. I am less certain that any of these 3 companies will develop a lasting silicon advantage over their peers.

Infrastructure

The hundreds of billions being spent on AI infrastructure are the stuff of legend. Hyperscalers have little choice but to build today: the world is short compute, and if they don’t build it they can’t sell AI to their customers. That is death. Nonetheless, there are two big questions regarding this spend:

Will current spending produce a worthwhile return on investment?

Does owning infrastructure confer a lasting competitive advantage?

I think the answers are yes and maybe.

Infrastructure question 1: ROI

Here’s how today’s capex could earn a poor ROI:

Supply gets overbuilt.

Demand collapses.

Obsolescence.

The supply overbuild argument is predicated on the idea that rising capex multiplied by improving efficiency = explosive growth in compute capacity. Currently, all the evidence is that more capacity is needed: hyperscalers say they are capacity constrained, Microsoft has to choose between allocating capacity to internal uses and selling it to customers, and older generations of chips aren’t being retired, suggesting they can still be rented out for attractive prices even though better chips are available. So far, so good. But will this last?
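The arithmetic behind the overbuild argument can be sketched in a few lines. This is an illustration only: the function name and both input figures are hypothetical, chosen to show how the two growth rates multiply rather than add.

```python
def capacity_growth(capex_growth: float, efficiency_gain: float) -> float:
    """Growth in newly added compute when spend and compute-per-dollar both rise."""
    return (1 + capex_growth) * (1 + efficiency_gain) - 1


# Hypothetical inputs, chosen only for illustration (not forecasts).
growth = capacity_growth(capex_growth=0.30, efficiency_gain=0.40)
print(f"New capacity grows {growth:.0%} year on year")
```

With these made-up inputs, 30% more spend and 40% more compute per dollar give 82% more new capacity, well above either figure alone. That compounding is what the bears are worried about.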

Bears point to the huge overbuild of fibreoptic capacity in the dot-com bubble as an obvious analogy. The problem wasn’t just that capacity was overbuilt initially; two additional factors combined to make things worse. One was that the capacity bottleneck was the send-receive technology at either end of the cable. Rapid improvements in cheap send/receive technology therefore multiplied capacity for years after the cables were installed. The second factor was that fibreoptic cables last decades if they’re installed well. As a result, overcapacity never self-corrected even as internet traffic exploded.

The datacentre buildout looks very different to my eyes. This is partly because there are clear braking factors on capacity growth, notably power availability, chip supply, and the fear of overbuying one generation of chips before a more efficient one comes along. Also, when we break the capex into its component parts some important differences with the fibreoptic buildout emerge. Roughly:

15% goes on land and buildings. This is the component that looks most like fibreoptic cables: the compute capacity that goes in the buildings will keep getting better and the buildings will last decades. But even if a few too many get built, the loss on the excess capacity won’t be total: land and buildings can be repurposed and retain value.

25% goes on electrical and cooling equipment. In the event that capacity is overbuilt this will suffer significant impairment, although much of it can be repurposed/used for parts.

60% goes on the actual compute, including GPUs. This is where most of the capex is going and it is where the fibreoptic analogy really breaks down. Although software improvements can meaningfully improve the performance of installed chips, there is no bottleneck equivalent to send/receive in fibreoptic. Instead there is a hard ceiling set by the physical hardware, so capacity cannot multiply for years after installation. And the useful life of chips, while subject to much debate, is far shorter than that of fibreoptic cable. As a result, compute capacity degrades over time rather than compounding. Even if capacity gets overbuilt, this will bring supply and demand back into balance relatively quickly.
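The difference between a long-lived and a short-lived asset base can be sketched with a toy model. Everything here is an assumption made for illustration: the build schedule, the 25-year and 5-year lives, and the simplification that a cohort delivers full capacity until it retires. It also ignores the send/receive upgrades that multiplied fibre capacity after installation, so it understates the fibre problem.

```python
def installed_capacity(additions, useful_life):
    """Capacity in service each year if every cohort retires after `useful_life` years."""
    stock = []
    for year in range(len(additions)):
        first_live = max(0, year - useful_life + 1)  # oldest cohort still in service
        stock.append(sum(additions[first_live:year + 1]))
    return stock


# Hypothetical build: 100 units a year for three years, then building stops.
additions = [100, 100, 100, 0, 0, 0, 0, 0]

long_lived = installed_capacity(additions, useful_life=25)  # cable-like asset (assumed life)
short_lived = installed_capacity(additions, useful_life=5)  # chip-like asset (assumed life)

print("long-lived: ", long_lived)
print("short-lived:", short_lived)
```

In this toy example the long-lived stock stays at 300 units indefinitely once building stops, while the short-lived stock falls from 300 to zero within five years. That is the self-correcting mechanism described above: an overbuild of chips works itself off as cohorts retire, whereas an overbuild of cable does not.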

What about demand collapse? The bearish argument is that training frontier models has an unproven ROI, and if investors get tired of pumping cash into OpenAI and Anthropic (in particular) they will have to cut back on training their next generation models. However, I think the risk of demand collapse is diminishing:

Inference has grown from a third of compute usage in 2023 to two thirds in 2026. Inference is driven by adoption of AI, not training new models. In my opinion, adoption has barely begun. Even if training spend drops, inference will keep growing, and compute built for training can be retasked to run inference workloads (albeit less efficiently).

It is becoming increasingly clear that AI has vast real-world uses. OpenAI and Anthropic are growing revenues rapidly - Anthropic has grown annual recurring revenue 30x in 15 months and 3x in the last 4. This might be the fastest revenue ramp in technology history. These companies are nowhere near self-funding, but as revenues grow their dependency on investors falls and their attractiveness to investors rises.
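To put the Anthropic figures on a common footing, the multiples can be converted into implied compound monthly growth rates. This is a back-of-envelope sketch: the function name is mine, and it assumes growth compounds smoothly and that the 4-month window is the final 4 months of the 15.

```python
def monthly_rate(multiple: float, months: int) -> float:
    """Compound monthly growth rate implied by growing `multiple`-fold over `months`."""
    return multiple ** (1 / months) - 1


rate_15m = monthly_rate(30, 15)           # 30x in 15 months
rate_4m = monthly_rate(3, 4)              # 3x in the last 4 months
rate_first_11m = monthly_rate(30 / 3, 11) # implied 10x over the first 11 months (assumption)

print(f"15-month average: {rate_15m:.1%} per month")
print(f"Last 4 months:    {rate_4m:.1%} per month")
print(f"First 11 months:  {rate_first_11m:.1%} per month")
```

On these assumptions the 15-month average works out at roughly 25% per month and the most recent 4 months at roughly 32% per month, which would mean the pace has not yet slowed.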

Finally, obsolescence is the idea that if tomorrow’s datacentre is vastly more cost-effective than yesterday’s, then tomorrow’s datacentre will set pricing and yesterday’s will struggle to earn a decent ROI. What great leaps forward will render today’s capacity obsolete? Two possibilities come to mind. The big one is the efficiency of leading-edge chips. These will inevitably make older chips obsolete. That’s a problem if the step up from one generation to the next is huge, and if the generations come in rapid succession. The evidence so far is the opposite: chips remain competitive long enough to more than earn their cost of capital. My guess is this will continue, but of course it is linked to demand: the combination of a huge step up in chip efficiency and a significant slowdown in demand would render a generation of chips obsolete. That said, this would be a one-time event and would collapse the cost of compute, stimulating demand and raising margins in other parts of the business. It’s a risk, but not an existential one.

The other potential great leap forward is some paradigm shift, such as Elon Musk’s goal of putting datacentres in space to benefit from 24/7 solar power and infinite radiative cooling into deep space. While this is theoretically coherent, it is a wildly complex idea to deliver cheaply. To put it politely, I suspect we are in for a wait.

In conclusion: the risk of a short to medium term ROI collapse is likely overstated by the bears. Demand is growing ahead of supply and may do so for years. Indeed, the real risk lies in not spending: being unable to sell AI and losing customers that can’t be regained. Nonetheless, at some point supply and demand will meet. ROI will compress, but capacity degradation will correct this fairly quickly. Also, any reduction in ROI on infrastructure may be offset by margin improvements in the layers that use compute. More on this in Part II.

Infrastructure question 2: does owning infrastructure confer a lasting competitive advantage?

Question 1 dealt with the short to medium term ROI on current capex. Question 2 is about long term ROI on infrastructure: does owning infrastructure confer competitive advantage such that ROI stays high, or does infrastructure commoditise?

There doesn’t seem to be much competitive advantage in owning the “bare metal” - the land, buildings, cooling systems, racks, and chips that make up a datacentre. But there is competitive advantage in integrating it all together. Running 100,000 GPUs as a coherent unit with optimised interconnect, utilisation, cooling, and so on is extraordinarily hard, and doing it better drives significant efficiency gains. Microsoft, Amazon, and Google have spent a decade learning how to do this. A new entrant cannot replicate this knowhow overnight even if they buy the same chips. But whether this edge is sustainable is another matter. We are in the early innings of a massive buildout. Multiple players are throwing capital at this and need to solve it if they are to survive. I think it is likely that the optimisation gap between the leaders and followers will narrow over time.

The other way to think about this is by workload. Infrastructure for frontier models and the most demanding large-scale inference needs to be optimised to perfection and there are genuine gaps between the leaders and the rest. On the other hand, infrastructure for standard inference done by midsize models is already commoditising. Competition is mainly on price and reliability. In addition, as small models improve and chips get more energy-efficient, inference will move to the edge driven by advantages in latency, security, and cost. (In other words, for simple tasks models will run on your computer or phone rather than in a datacentre.)

These two trends - the narrowing of the optimisation gap, and the relentless forward march of the frontier leaving ever more commoditised inference in its wake - suggest to me that over time, more and more infrastructure will become commoditised. If so, a new utility industry will emerge selling commoditised compute with a mix of long term contracts and instantly-available capacity at spot. There may be competitive advantage in owning frontier infrastructure, but as the industry matures hyperscalers like Microsoft will be able to choose what they own and outsource the rest.

This has implications for ROI and free cash flow. The market sees the hyperscalers ramping capex and worries that ROIC and FCF must fall. I think this framing might be wrong. What’s really happening is that the hyperscalers are incubating a second business. While AI is compute constrained, hyperscalers must own compute in order to guarantee supply and sell AI. But in the long term, the decision to own compute will be driven by ROI. If there is long term competitive advantage in owning compute, the ROI will stay high and the hyperscalers will retain ownership. If not, utility compute can be separated from the original capital light businesses, either by selling/spinning compute or simply by reducing capex and buying third party compute. My money is on at least partial commoditisation and separation.

What really matters, therefore, is that the original capital light business is still there, still profitable, and has a vastly expanded TAM. We will explore this in Part II.

Thanks for reading - if you enjoyed reading this please subscribe, like, and restack, and do get in touch if you have questions.

Pete